One afternoon in October, a strange Honda CR-V moved slowly through traffic in London. At the steering wheel was Matthew Shaw, a 32-year-old architect; he was accompanied by a 30-year-old designer, William Trossell, and a small team of laser-scanner operators.

They were skilled in technology, but their goal was art.

What they wanted to scan was not just the shape of the city's streets but the inner life of the self-driving cars that could soon dominate them.

Shaw and Trossell have been 3-D scanning since they met at architecture school.

There, they looked at how laser scanners perceive the built environment, including the biases, blind spots and unique insights that any such technology must contain.

In 2010, they started ScanLAB Projects, a design studio in London, to expand the scope of that investigation.

They already knew that laser-scanning equipment can easily be fooled when the gear is used, or misused, under inappropriate conditions.

Whether it's architectural ruins, geological forms, or commercial buildings in central London, solid objects are especially suitable for scanning.

Fog banks, mist and afternoon drizzle, not so much.

Yet the early work of Trossell and Shaw focused on precisely this: pushing the technology into unexpected areas where, by definition, things could not go as planned.

They set up laser scanners deep in the forest and captured low, rolling banks of fog as digital blurs over the landscape; moving ice floes, scanned from a ship north of the Arctic Circle, formed overlapping mazes on their hard drives, blurred at the edges, as if the horizon of the world had begun to bend.

These first projects, commissioned by the BBC and Greenpeace, have developed into a new undertaking: mapping London through the robot eyes of a self-driving car.

In the next few years, one of the most important uses of 3-D scanning will be carried out not by humans at all, but by self-driving vehicles.

Cars are already learning to drive themselves by way of scanner-assisted braking, pedestrian-detection sensors, parallel-parking support, lane-departure warnings and other complex driver-assistance systems, and full autonomy is coming soon. Google's self-driving cars have traveled more than a million miles on public roads; Tesla's Elon Musk says he may have a driverless passenger car by 2018; and the Institute of Electrical and Electronics Engineers says self-driving cars will account for up to 75 percent of the cars on the road by 2040.

Driver-controlled cars reshaped the world in the last century, and there is good reason to expect driverless cars to reshape it again in the coming century: traffic jams may disappear as cars steer themselves along cooperatively evolving routes by exchanging information with one another.

With robotic cars readily available, the demand for street parking may disappear, freeing up space that takes up as much as a third of the entire surface area of some major cities in the United States.

When distracted drivers are replaced by unblinking machines, roads could become safer for everyone.

But all of this depends on whether the cars can navigate the built environment.

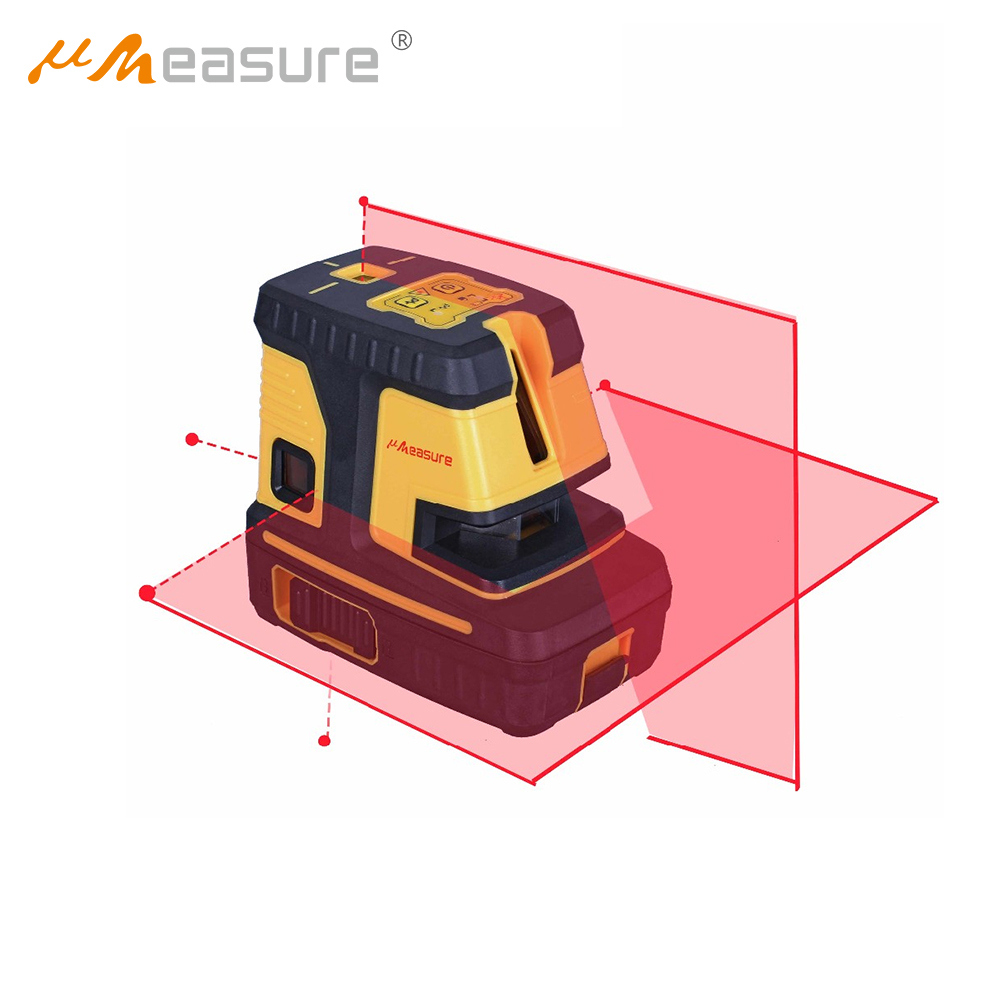

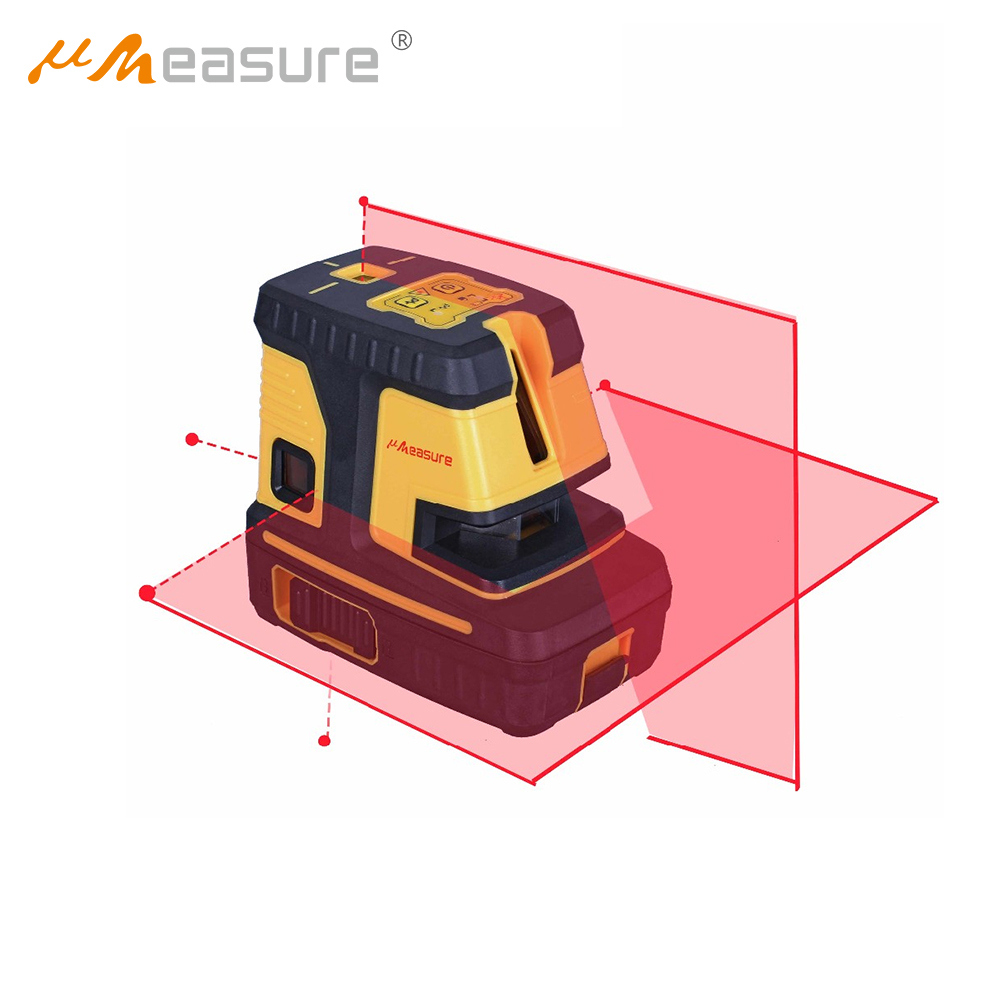

The cars now being tested by Google, BMW, Ford and others all see by way of a special scanning system called lidar (a portmanteau of "light" and "radar").

Lidar scanners send out tiny bursts of light, invisible to the human eye, almost a million times per second, and these bounce back from every building, object and person in the area.

This mechanical flickering yields extremely detailed, millimeter-scale measurements of the surrounding environment, far more accurate than anything the human eye can achieve.

Capturing a scene this way is like photography, but it operates in volume, producing a complete three-dimensional model of the scene.

The extreme accuracy of lidar lends it an air of absolute objectivity; a clean scan of a stationary structure can be so precise that nonprofit organizations such as CyArk have been using lidar as a tool for archaeological preservation in conflict zones, hoping to document at-risk historic sites before they are destroyed.

However, lidar has its own defects and weaknesses.

It can be thrown off by reflective surfaces or bad weather: mirrored glass, for instance, or the raindrops of a morning thunderstorm.

With the emergence of the first wave of self-driving cars, engineers are struggling with the complex, even absurd, circumstances that make up everyday street life.

Consider the cyclist in Austin, Texas, who found himself in a strange standoff with one of Google's self-driving cars. Having arrived at a four-way stop just a few seconds after the car, the cyclist gave up his right of way.

Instead of stopping completely, however, he performed a track stand, rocking back and forth without putting his feet on the ground.

Paralyzed by indecision, the car mirrored the cyclist's own movements, moving forward and stopping, moving forward and stopping, unsure whether the cyclist was about to enter the intersection.

As the cyclist later wrote in an online forum, the two people inside the car were laughing and typing on a laptop, apparently trying to modify some code to "teach" the car how to deal with the situation.

Illah Nourbakhsh, a professor of robotics at Carnegie Mellon University and the author of the book "Robot Futures," uses the metaphor of the perfect storm to describe an event so strange that no amount of programming or image-recognition technology could be expected to understand it.

Imagine someone wearing a T-shirt with a stop sign printed on it, he told me. "If they are walking outside, and the sun is at just the right level of glare, and a mirrored truck is parked next to you, and the sun bounces off the truck and hits that person so that you can't see his face, now your car just sees a stop sign. The chances of all that happening are very small. It is very, very unlikely. But the problem is that we will have millions of these cars. The very unlikely will happen all the time."

Moving slowly in traffic at Tower Bridge in London, the mobile laser scanner accumulated layers of data of the kind usually corrected by algorithms, turning the bridge into a tunnel of light.

ScanLAB's mobile scanner models its maze-like route through London as a straight line.

Norman Foster's "Gherkin" building appears twice because the scanner passed it twice, producing a kind of scanner Cubism.

Trapped in traffic, the mobile scanner inadvertently recorded a London double-decker bus as one large continuous structure, while stretching the surrounding city like toffee.

Nourbakhsh explained that the sensory limitations of these vehicles must be taken into account, especially in an urban world full of complex building forms, reflective surfaces, unpredictable weather and temporary construction sites.

This means that cities may need to be redesigned, or may simply change over time, to accommodate the particular way these cars experience the built environment.

The flip side of these examples is that, in these brief moments of misinterpretation, a different version of the urban world exists: a parallel landscape seen only by machine-perception technology, in which objects and signs invisible to human beings nevertheless have real effects on the operation of the city.

If we can learn from human misperception, perhaps we can also learn something from the illusions and hallucinations of sensing machines. But what?

All the glitches, reflections and misread signs that Nourbakhsh warns about are exactly what ScanLAB is now trying to capture.

Shaw said that their goal is to explore the peripheral vision of driverless cars, or what he calls the "sideline": the overlooked edges of the city that autonomous cars and their unblinking scanners will perpetually, if inadvertently, see.

By deliberately disabling certain aspects of the scanner's sensors, ScanLAB found that they could adjust the device to reveal its neglected artistic potential. While a self-driving car would normally use corrective algorithms to account for problems such as long periods stuck in traffic, Trossell and Shaw instead let those flaws accumulate.

Moments of accidental information density become part of the resulting aesthetic.

Their work reveals London as a landscape of ancient monuments and ornate buildings, but also as one haunted by duplications and digital ghosts.

The city's double-decker buses, scanned over and over, turn into time-stretched, featureless giants that block entire streets at once.

Other buildings seem to repeat and stutter, a riot of Houses of Parliament jostling shoulder to shoulder with themselves in the distance.

Workers setting out for a lunchtime walk turn into spectral silhouettes, appearing as aberrations at the edge of the image.

Glass towers disperse into the sky like cigarette smoke.

Trossell calls these "crazy machine illusions," as if he and Shaw had awakened some kind of Frankenstein's monster inside the automotive industry's most advanced imaging technology.

ScanLAB's project suggests that humans are not the only things now perceiving and experiencing the modern landscape: something else is here too, with a completely different, fundamentally inhuman view of the built environment.

If the conceptual premise of the Romantic movement can be roughly described as the experience and documentation of extreme landscapes, as an art of remote peaks, deep ocean chasms and vast uninhabited lands, then ScanLAB suggests that a new kind of Romanticism is emerging through the sensing packages of autonomous machines.

Where artists once traveled great distances to witness sublime and magnificent scenes, the same wondrous and unsettling sights can now be found within the means of travel itself.

As we gaze into the algorithmic dreams of these vehicles, we may also be getting a first glimpse of what happens when they wake up.